These HTML pages are based on the PhD thesis "Cluster-Based Parallelization of Simulations on Dynamically Adaptive Grids and Dynamic Resource Management" by Martin Schreiber. There is also more information and a PDF version available.

8.1 Invasion with OpenMP and TBB

Our Invasive Computing extensions are built on existing functionality of OpenMP and Intel TBB. These models offer parallelization via pragma language extensions and via a library embedded into the C++ language, respectively. Parallelization on shared-memory systems is subject to restrictions similar to those on distributed-memory systems, which the standard HPC threading libraries do not consider so far:

- On shared-memory systems, an application can always be started using all available resources. However, an application should not be started when some of the computing resources it accesses are used by other applications. Otherwise, the applications preempt each other and compete for shared caches [KCS04], which leads to a severe loss of performance. This is particularly important for urgent computing (see e.g. [BNTB07]), which requires starting an application even though other applications already use the required resources.

- An a-priori thread allocation cannot account for a changing scalability of algorithms. The changing workload of our DAMR simulations introduced in Part III leads to a strongly varying scalability over the runtime. For significantly smaller workloads, e.g. Tsunami parameter studies, this also leads to an underutilization of resources unless they are shared dynamically and efficiently with other concurrently running applications.

The applications considered in this work are based on time-stepping schemes. We assume a loop that iterates over the time steps required for the simulation, with parallelization only inside the loop. Since OpenMP and TBB do not sufficiently support changing the number of threads inside a parallel region (see e.g. [Ope08] for OpenMP), we allow changes to the threads only at the very beginning of each loop iteration, i.e. only between simulation time steps. To support invasion of cores, we then have to (a) change the number of threads capable of work stealing and (b) pin the work-stealing threads to physical compute cores.

- (a) For OpenMP, we set the number of threads with omp_set_num_threads(#cores). With TBB, the number of worker threads is set via tbb::task_scheduler_init(#cores).

- (b) We accomplish the pinning by executing a single task on each thread, e.g. using a parallel for loop over the number of available cores with a chunk size of 1. For TBB, we first set the affinity of each task to the corresponding thread which is used to invade a core. In each thread, mutexes are then used to avoid work stealing, which could otherwise leave threads unpinned or even pin a thread to the wrong core. Inside the task, the affinity of the executing thread is then set to the invaded core, based on information provided by the resource manager (RM).
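Steps (a) and (b) can be illustrated for the OpenMP case with the following minimal sketch. It is not the implementation from the thesis: the names pin_calling_thread, invade_cores and run_time_steps are hypothetical, the core list stands in for the information provided by the RM, and the pinning assumes Linux with sched_setaffinity. A serial stand-in for omp_set_num_threads is included so that the sketch also builds without OpenMP enabled.

```cpp
#include <functional>
#include <vector>

#if defined(__linux__)
#include <sched.h>  // sched_setaffinity, cpu_set_t (Linux-specific)
#endif

#ifdef _OPENMP
#include <omp.h>
#else
// Serial stand-in so the sketch also builds without OpenMP enabled.
inline void omp_set_num_threads(int) {}
#endif

// Hypothetical helper: pin the calling thread to a single core.
// Returns true if the operating system accepted the affinity mask.
inline bool pin_calling_thread(int core_id) {
#if defined(__linux__)
    if (core_id < 0 || core_id >= CPU_SETSIZE) return false;
    cpu_set_t mask;
    CPU_ZERO(&mask);
    CPU_SET(core_id, &mask);
    return sched_setaffinity(0, sizeof(mask), &mask) == 0;  // pid 0: calling thread
#else
    (void)core_id;
    return false;  // no pinning call assumed on other platforms
#endif
}

// (a) Resize the thread team, then (b) run one pinning task per thread.
// `cores` is an assumed input: the core ids granted by the RM.
// Returns the number of pinning tasks that succeeded.
inline int invade_cores(const std::vector<int>& cores) {
    const int n = static_cast<int>(cores.size());
    if (n == 0) return 0;
    omp_set_num_threads(n);  // step (a)

    int pinned = 0;
    // Step (b): a static schedule with chunk size 1 hands iteration i to
    // thread i, provided the runtime really delivers n threads.
    #pragma omp parallel for schedule(static, 1) reduction(+ : pinned)
    for (int i = 0; i < n; ++i) {
        if (pin_calling_thread(cores[i])) ++pinned;
    }
    return pinned;
}

// Time-stepping driver: the thread team is changed only between time steps.
// `granted_cores(t)` is a hypothetical stand-in for querying the RM.
inline int run_time_steps(int num_steps,
                          const std::function<std::vector<int>(int)>& granted_cores) {
    int executed = 0;
    for (int t = 0; t < num_steps; ++t) {
        invade_cores(granted_cores(t));  // outside of any parallel region
        // ... parallel computation of time step t would go here ...
        ++executed;
    }
    return executed;
}
```

With a static schedule, OpenMP does not move iterations between threads, so the sketch needs no mutexes. The mutex-based protection described in (b) concerns TBB, where a pinning task could otherwise be stolen by a different worker thread.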

We update the number of active threads in each application and their pinning to cores only when the resources change, either in the number of threads or in their pinning.
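One way to realize this check is to remember the core list that was applied last and to compare it with the newly granted one. The following sketch assumes that the granted resources are represented as a vector of core ids, where position i holds the core for thread i. The class name ResourceTracker and the apply callback are hypothetical and serve only as illustration.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical tracker of the resources that were applied last.
class ResourceTracker {
public:
    // Calls `apply(granted)` only if the granted cores differ from the
    // ones applied last. Returns true if an update was performed.
    template <typename Apply>
    bool update(const std::vector<int>& granted, Apply apply) {
        // Vector comparison detects both a changed number of threads
        // (different size) and a changed pinning (different entries).
        if (granted == current_) return false;
        apply(granted);
        current_ = granted;
        return true;
    }

    // Number of threads that are currently active.
    std::size_t num_threads() const { return current_.size(); }

private:
    std::vector<int> current_;  // position i: core id for thread i
};
```

The apply callback would typically resize the thread team and redo the pinning. Skipping it for unchanged resources avoids this overhead in every time step.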

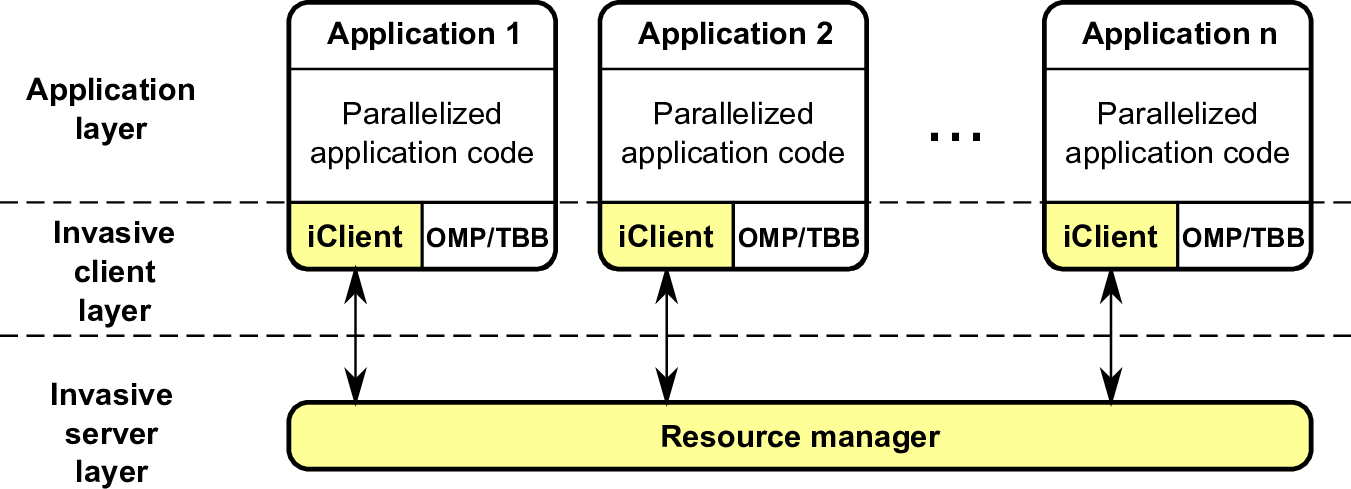

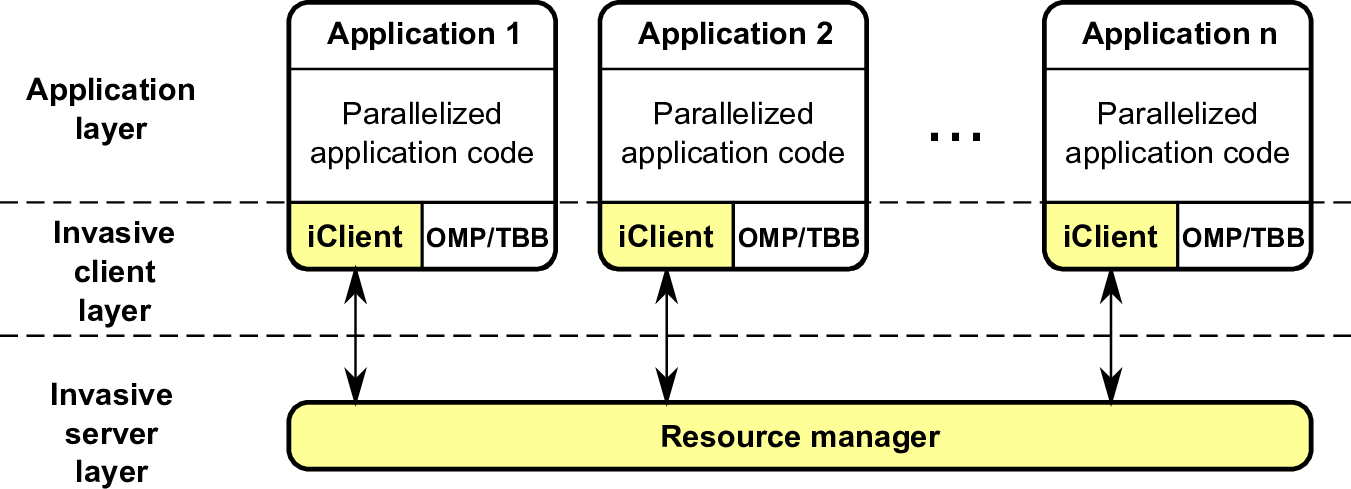

Considering the previously mentioned requirements, this leads to the software design presented in Fig. 8.1. Each application is extended with an invasive client layer that offers the invasive commands discussed in the next section. This client-side extension supports both OpenMP and TBB. The resource manager then orchestrates the resources for all registered invasive applications.